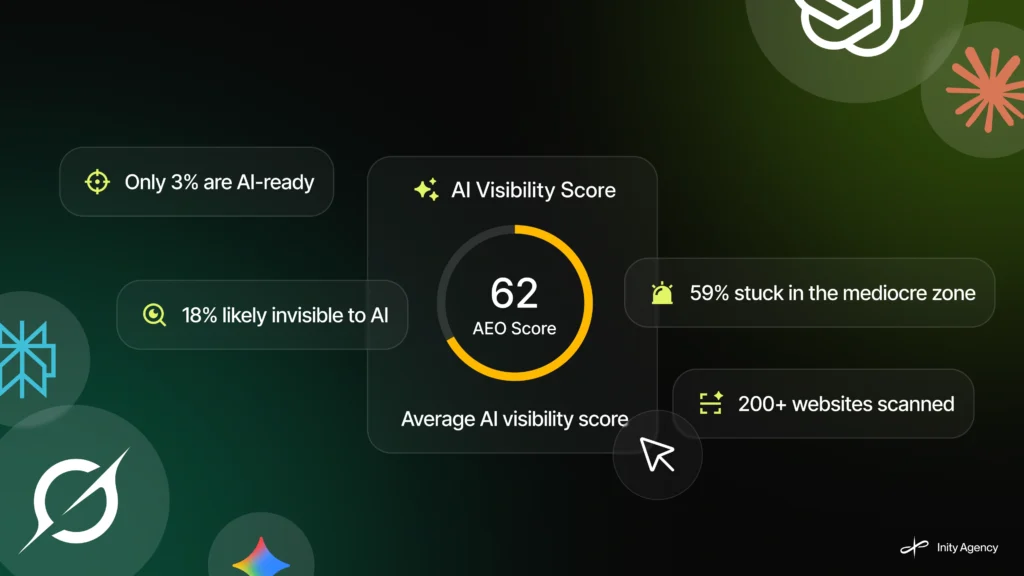

We Scanned 200+ Sites for AI Visibility. Only 3% Passed.

We built AEO Checker to answer one question: how visible is your website to AI engines like ChatGPT, Perplexity, Google AI Overviews, and Gemini? After 211 real scans from real users across real websites, the data is in – and the picture isn’t good. Average score: 61.9 out of 100. Only 7 websites crossed 90. Zero scored below 31. At Inity Agency, this is what we found.

What Did 211 AEO Scans Actually Reveal?

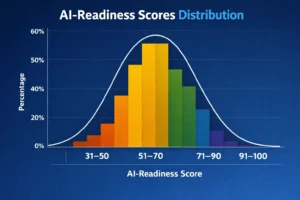

After 211 scans using the Inity AEO Checker, the average AI visibility score across all websites was 61.9 out of 100 – firmly inside what the data shows as the “mediocre zone.” Only 7 websites scored above 90. No website scored below 31. The most common score was 58. The spread tells a clear story: almost nobody is completely broken, but almost nobody is actually AI-ready.

Here’s the full distribution:

| Score Range | Sites | % of Total | What It Means |

|---|---|---|---|

| Below 31 | 0 | 0% | Fully broken – no sites here |

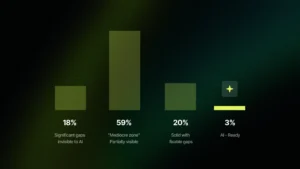

| 31–50 | 38 | 18% | Significant gaps – likely invisible to AI |

| 51–70 | 123 | 58.3% | The mediocre zone – partially visible, inconsistently cited |

| 71–90 | 43 | 20.4% | Solid but fixable gaps remain |

| 91–100 | 7 | 3.3% | Actually AI-ready |
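The percentages in the table follow directly from the site counts. As a quick sanity check, here is a minimal Python sketch that recomputes them; the band labels and counts are copied from the table, while the function and variable names are illustrative only.

```python
# Counts per score band, copied from the distribution table.
BAND_COUNTS = {
    "Below 31": 0,
    "31-50": 38,
    "51-70": 123,
    "71-90": 43,
    "91-100": 7,
}


def band_shares(counts: dict[str, int]) -> dict[str, float]:
    """Return each band's share of all scans as a percentage, rounded to one decimal."""
    total = sum(counts.values())
    return {band: round(100 * n / total, 1) for band, n in counts.items()}


TOTAL_SCANS = sum(BAND_COUNTS.values())
SHARES = band_shares(BAND_COUNTS)

for band, pct in SHARES.items():
    print(f"{band:>8}: {pct:.1f}% of {TOTAL_SCANS} scans")
```

The counts add up to the 211 scans in the dataset, and the 51–70 band works out to 58.3%.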

The 58.3% clustered in the 51–70 range is the most telling finding. These aren’t bad websites. Many of them rank on Google and have clean design. They’re just not structured for the way AI engines read and cite content.

What Does an Average Score of 61.9 Actually Mean?

A score of 61.9 out of 100 means the average website is partially visible to AI engines – enough to be indexed, but not enough to be consistently cited. AI engines like Perplexity and ChatGPT can access the site, but they struggle to extract clean, citable answers. The site exists in the AI’s knowledge space as a blurry signal rather than a clear source.

To put 61.9 in context: websites in this range typically have some schema markup but significant gaps, content that’s readable but not structured for direct answers, and entity presence that’s inconsistent across the web. They show up in AI-generated responses occasionally – but not reliably, and not as a primary citation.

Which Types of Websites Scored Highest – and Which Scored Lowest?

The 7 websites that scored above 90 shared four traits: complete schema markup including FAQPage and Organization with sameAs attributes, content structured with direct answers under every heading, full AI crawler access confirmed across GPTBot, PerplexityBot, ClaudeBot, and others, and consistent entity presence across external sources. The lowest-scoring sites – some scoring 31–38 – were missing all four of these layers simultaneously.

One finding that consistently surprises people: Google.com scored 44. Amazon.in scored 38.

These are two of the most visited websites on the internet. They rank for everything. They have enormous domain authority. And they score below average on AI visibility – because AI optimization is a fundamentally different target than traditional SEO. A website built to rank on Google was built for a different system than a website built to be cited by ChatGPT or Gemini.

This isn’t a criticism of Google or Amazon. It’s a signal about where the industry is right now: even the best-optimized traditional websites weren’t built with AI engines in mind.

What Is Breaking Most Websites’ AI Visibility?

The 4 failure patterns that appear consistently across 200+ scans are: incomplete schema markup (exists but missing critical properties), quietly blocked AI crawlers in robots.txt, content structured for human reading rather than AI extraction, and weak entity presence outside the website itself. These four issues rarely appear alone – most sites in the mediocre zone have all four simultaneously.

Here’s how each failure pattern works in practice:

1. Schema Markup Exists – But Is Incomplete

Most websites in the 51–70 range have some schema markup. That’s actually the problem – it creates a false sense of security. The Organization schema is present but missing the sameAs property that connects the website to verified external entities (LinkedIn, Wikidata, Crunchbase). The Service schema is absent. FAQPage schema is missing even when a visible FAQ section exists on the page.

AI engines use schema as a trust signal. In some respects, incomplete schema is worse than no schema at all – it tells the AI engine the site is trying to communicate structured data but isn’t doing it correctly.
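To make "present but incomplete" concrete, here is a minimal Python sketch that checks an Organization JSON-LD block for missing properties. The list of required properties is an assumption drawn from the gaps described above, not a rule published by any AI engine or by AEO Checker, and the company name and URLs are placeholders.

```python
import json

# Properties this sketch treats as required (an assumption for illustration).
REQUIRED_ORG_PROPS = ("name", "url", "sameAs")


def missing_org_props(json_ld: str) -> list[str]:
    """Return required properties that are absent or empty in an Organization block."""
    data = json.loads(json_ld)
    if data.get("@type") != "Organization":
        # No Organization block at all: everything is missing.
        return list(REQUIRED_ORG_PROPS)
    return [prop for prop in REQUIRED_ORG_PROPS if not data.get(prop)]


# Placeholder markup: schema exists, but sameAs is missing.
INCOMPLETE = json.dumps({
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://example.com",
})

# Same block with sameAs linking to (hypothetical) external profiles.
COMPLETE = json.dumps({
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://example.com",
    "sameAs": [
        "https://www.linkedin.com/company/example-co",
        "https://www.crunchbase.com/organization/example-co",
    ],
})

print(missing_org_props(INCOMPLETE))
print(missing_org_props(COMPLETE))
```

The first block would pass a basic "does schema exist?" check, yet it is exactly the kind of markup that lacks the link to external entities.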

2. AI Crawlers Are Quietly Blocked

This is the most invisible problem – and one of the most common. A website can look perfectly functional to humans and rank normally on Google while having GPTBot, PerplexityBot, or ClaudeBot disallowed in robots.txt. This typically happens because robots.txt was last updated years ago with broad disallow rules, and no one thought to check whether the new generation of AI crawlers was included.

The result: the site has zero AI visibility not because of content or schema problems, but because the AI engine was never allowed through the door.
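A blocked crawler is easy to test for. The sketch below uses Python’s standard `urllib.robotparser` module to check which AI crawlers a robots.txt file turns away. The crawler names come from this article; the sample robots.txt content and the `example.com` URL are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# User-agent names mentioned in this article.
AI_CRAWLERS = ("GPTBot", "PerplexityBot", "ClaudeBot")


def blocked_ai_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI crawlers that the given robots.txt text disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


# Hypothetical file: one AI crawler blocked by name, everything else allowed.
TARGETED_BLOCK = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Hypothetical file: an old blanket rule that blocks every crawler.
BROAD_BLOCK = """\
User-agent: *
Disallow: /
"""

print(blocked_ai_crawlers(TARGETED_BLOCK))
print(blocked_ai_crawlers(BROAD_BLOCK))
```

In the first sample only GPTBot is reported as blocked; in the second, the broad rule catches all three, which is the scenario described above.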

3. Content Isn’t Structured for AI Extraction

AI engines extract answers differently than humans read content. A human reads a paragraph and synthesizes meaning from context. An AI engine looks for a direct, self-contained answer immediately after a heading – ideally in 40–60 words that can be cited without surrounding context.

Most websites are written for human comprehension. Paragraphs are long. Answers are buried three sentences into a section. Headings are written for curiosity rather than clarity. This content works for readers. It doesn’t work for Perplexity or Google AI Overviews trying to extract a citable response to a specific query.
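One rough way to audit this is to check the length of the first paragraph under each heading. The following Python sketch flags sections whose opening paragraph falls outside the 40–60 word window mentioned above. It assumes you have already extracted headings and their first paragraphs into a dictionary; the function names and sample content are hypothetical.

```python
def word_count(paragraph: str) -> int:
    """Count whitespace-separated words in a paragraph."""
    return len(paragraph.split())


def sections_outside_window(
    sections: dict[str, str], low: int = 40, high: int = 60
) -> dict[str, int]:
    """Map each heading whose first paragraph is not `low`..`high` words to its word count."""
    return {
        heading: word_count(first_paragraph)
        for heading, first_paragraph in sections.items()
        if not low <= word_count(first_paragraph) <= high
    }


# Placeholder sections: heading -> first paragraph under that heading.
SAMPLE_SECTIONS = {
    "What does the product do?": " ".join(["word"] * 50),   # inside the window
    "Why choose us?": " ".join(["word"] * 12),               # too short
    "How does pricing work?": " ".join(["word"] * 140),      # too long
}

for heading, count in sections_outside_window(SAMPLE_SECTIONS).items():
    print(f"{heading!r}: first paragraph is {count} words")
```

Word count is only a proxy. A 50-word paragraph that never answers the heading’s question still fails the real test, so treat the output as a list of sections to review by hand.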

4. Entity Clarity Is Weak

AI engines build a picture of who a business is by cross-referencing sources: the website, LinkedIn, Crunchbase, Google Business Profile, Wikidata, industry directories. When those sources are inconsistent – different descriptions, different service categories, different founding dates – the AI builds a blurry picture and defaults to more clearly defined alternatives.

A technically excellent website with inconsistent off-site presence will lose citations to a less sophisticated website whose entity is clearly established across multiple sources.
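Entity consistency can be spot-checked by laying the same fields from each source side by side. Here is a minimal Python sketch of that comparison. The source names come from this article, but the profile data, field names, and normalization rule (lowercase, collapsed whitespace) are assumptions for illustration, and the data would have to be collected by hand or from each platform separately.

```python
def inconsistent_fields(profiles: dict[str, dict[str, str]]) -> dict[str, set[str]]:
    """Return fields whose normalized values differ between sources."""
    seen: dict[str, set[str]] = {}
    for fields in profiles.values():
        for key, value in fields.items():
            # Normalize case and whitespace so trivial differences are ignored.
            normalized = " ".join(value.lower().split())
            seen.setdefault(key, set()).add(normalized)
    return {key: values for key, values in seen.items() if len(values) > 1}


# Hypothetical profile data for one business across three sources.
PROFILES = {
    "website": {"name": "Example Co", "founded": "2018", "category": "Web design"},
    "linkedin": {"name": "Example Co", "founded": "2019", "category": "Web Design"},
    "crunchbase": {"name": "Example  Co", "founded": "2018", "category": "Web design"},
}

for field, values in inconsistent_fields(PROFILES).items():
    print(f"{field}: {sorted(values)}")
```

In this sample only the founding year is flagged, because the name and category differ only in spacing and capitalization.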

Why Can a Website Score 95+ on Lighthouse and 55 on AI Visibility?

A website can score 95+ on Google Lighthouse and still score 55 on AI visibility because Lighthouse measures technical web performance – load speed, accessibility, code quality – while AI visibility measures content structure, schema completeness, crawler access, and entity clarity. These are different optimization targets built for different systems.

Lighthouse was designed to measure how well a website performs for human users and traditional search engine crawlers. It doesn’t evaluate whether GPTBot can access the site, whether FAQPage schema exists, or whether content is structured so Gemini can extract a direct answer. A site can pass every Lighthouse audit and still be effectively invisible to the AI layer of the web.

This is the core insight from the 211-scan dataset: the old standards don’t cover the new requirements. Most of the websites in the mediocre zone were built correctly – for 2020. The optimization target has shifted.

How to Move From the Mediocre Zone to AI-Ready

Moving a website from the 51–70 range into the 80+ range typically requires four targeted fixes – none of which require rebuilding the site:

- Audit and complete schema markup – add FAQPage schema to every page with a FAQ section, add sameAs to Organization schema linking to LinkedIn, Crunchbase, and Google Business Profile, add Service schema for each service offered

- Audit robots.txt for AI crawler access – check that GPTBot, PerplexityBot, ClaudeBot, and Google-Extended are not disallowed; update the file if they are

- Restructure key content sections – rewrite H2 sections to include a direct 40–60 word answer immediately below the heading, before any elaboration

- Build entity consistency off-site – ensure your business name, description, service categories, and founding information are consistent across LinkedIn, Crunchbase, Google Business Profile, and at least two industry directories

Sites that address all four typically move from an average score of 61.9 to the 80–90 range in a single implementation sprint. The 90+ range requires consistent execution across all four layers simultaneously – which is why only 3.3% of scanned sites achieved it.
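For the schema fix, FAQPage markup can be generated from the questions and answers already visible on the page. The Python sketch below builds a FAQPage JSON-LD block using the schema.org `Question` and `Answer` types. The function name and the sample question are illustrative, and the output still needs to be embedded in the page inside a `<script type="application/ld+json">` tag.

```python
import json


def faq_page_json_ld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder FAQ content; use the questions and answers shown on the page.
SAMPLE_FAQ = [
    ("What does Example Co do?", "Example Co designs and builds websites."),
]

print(faq_page_json_ld(SAMPLE_FAQ))
```

Keeping the markup generated from the same source as the visible FAQ helps avoid the mismatch described earlier, where an FAQ section exists on the page but has no schema behind it.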

What Separates the 3% That Passed?

The 7 websites that scored above 90 out of 211 scanned sites had one thing in common: every layer of AEO was implemented correctly and completely, not just partially. Schema markup was complete with all required properties. AI crawlers had confirmed access. Content included direct answer paragraphs under every major heading. Entity presence was consistent across multiple external sources.

None of the 90+ sites were large enterprise sites. They were typically focused, well-maintained websites where someone had deliberately optimized for AI visibility – not as an afterthought, but as a design decision from the start.

Interestingly, among the five sites scoring exactly 95 – the dataset’s highest score – was the AEO Checker tool itself. That’s not a coincidence. It’s the score that results when you build a page specifically to demonstrate what AI-ready looks like.

Conclusion

The 211-scan dataset confirms what Inity Agency suspected when building AEO Checker: most websites are operating in a visibility gap that traditional optimization tools don’t measure and traditional SEO frameworks don’t address. The average score of 61.9 isn’t a failure of web development – it’s a predictable result of building for the old standard while AI engines operate on new requirements. The 4 failure patterns – incomplete schema, blocked crawlers, unstructured content, weak entity clarity – are fixable. None require a rebuild. They require knowing where to look.

Frequently Asked Questions

The average AI visibility score across 211 websites scanned with Inity AEO Checker was 61.9 out of 100. The median score was 61. The most common score was 58. Only 7 websites scored above 90, and no website scored below 31 – meaning virtually every site has some baseline visibility, but almost none are fully AI-ready.
